Key takeaways:

- One-time login checks can’t keep up with modern fraud. Passwords, SMS OTP, and knowledge checks fail because they’re static and replayable.

- Attackers win by combining stolen signals with automation, then behaving “normally” until they can change payees, add devices, or transfer funds.

- Continuous trust keeps re-scoring the session using identity, behavior, and context signals.

- Durable security comes from a layered stack: biometrics, voice signals, and AI risk scoring.

- Add extra steps only when the action is risky: new payees, new devices, transfers, or profile changes.

- Keep the stack modular so defenses can change without rebuilding your identity architecture.

- Start where loss concentrates (high-risk payments and call-center account recovery), then expand from there based on measured risk.

Identity fraud has moved from a periodic risk to a high-frequency, high-cost operating reality. Javelin’s 2025 Identity Fraud Study reports that U.S. consumers lost $27.2 billion to identity fraud in 2024, a 19% increase year over year. This hits the financial industry especially hard.

Many financial institutions are still trying to manage yesterday’s fraud with yesterday’s tools: passwords, one-time challenges, and rule sets built for a world where attacks were static and identities stayed stable. That mismatch keeps widening. Today’s fraud is automated, adaptive, and increasingly designed to look “normal” long enough to pass a one-time checkpoint.

That’s why the real problem isn’t “how do we authenticate better at login,” but how do we keep trust intact throughout the entire customer journey?

Why “verified” doesn’t mean safe anymore

Deepfake voice, stolen credentials, and, more importantly, automation have shifted fraud from break-in attempts to normal-looking sessions.

Here’s a realistic example: a selfie check is passed during onboarding or account recovery, and the system marks the user as “verified.” In reality, a fraudster spoofed that step using a replayed video, a deepfake, or injected camera-stream data.

While everything appears normal, the fraudster can do real damage: change the phone number or email address to take control, add a new payee, and initiate a transfer. Before the customer notices or the bank intervenes, the money is gone. The system validated the face, but the session was never legitimate.

The standard security assurance model assumes that a single moment of verification can establish trust for everything that follows, creating a clean window for attackers. Once a session looks legitimate, an attacker can move money, change details, add devices, or set up new payees with fewer alarms than a real customer triggers when traveling.

The most widely used protection methods are failing more and more often:

- Passwords

They are easy to reuse, easy to steal via phishing, and they are frequently exposed in data breaches. At an ecosystem level, stolen credentials remain a dominant ingredient in real-world compromises.

- SMS one-time passcodes (OTPs)

SMS OTP depends on the phone number being reliably bound to the right person and device. In reality, attackers can often take over that number via SIM swap/device swap or number porting (moving the number to a new SIM/carrier), which lets them receive the OTP. Because of that, the U.S. National Institute of Standards and Technology advises verifiers to check for specific risk signals before sending OTPs over the public telephone network.

- Security questions

“Knowledge-based” checks age badly: answers can be guessed, socially engineered, or reconstructed from data exhaust (public profiles, breached datasets, and other traces). In practice, that means that real customers get extra steps, while an attacker with enough personal data can still pass.
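To make the SMS OTP point above concrete, here is a minimal sketch of checking risk signals before sending a code over the phone network. The `PhoneRisk` fields, the 72-hour cooldown, and the `"alternative"` channel label are all illustrative assumptions; real signals would have to come from a carrier or telephony-intelligence source that the article does not name.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PhoneRisk:
    """Hypothetical risk signals for a phone number (e.g. from a carrier lookup)."""
    hours_since_sim_change: Optional[float] = None  # None: no change on record
    hours_since_port: Optional[float] = None        # None: no porting on record


def choose_otp_channel(risk: PhoneRisk, cooldown_hours: float = 72.0) -> str:
    """Return "sms" if no recent SIM change or port is known, else "alternative"."""
    recent_events = [
        hours
        for hours in (risk.hours_since_sim_change, risk.hours_since_port)
        if hours is not None and hours < cooldown_hours
    ]
    return "alternative" if recent_events else "sms"
```

A number whose SIM changed five hours ago would be routed away from SMS, while a number ported weeks ago would not. The cooldown value is a policy choice, not a recommendation.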

Attempts to strengthen one-time checks (for example, adding a liveness test or voice match) turn the control into a cat-and-mouse game because it still ends in a single pass or fail moment. In that setup, attackers only need one clean win to get in, while the institution has to hold the line every time that check is run.

This shift from stealing credentials to mimicking the individual challenges the whole concept of static verification. When identity itself can be faked, security isn’t a one-time gate you open — you need to confirm continuously that the user and the session remain legitimate.

The concept of continuous trust. What does it mean?

Continuous trust is a security model where trust is treated as a living score, not a one-time decision.

In static verification, you prove identity at a checkpoint (login, OTP, KBA), then the system largely assumes the session is legitimate until something obviously looks off.

In continuous trust, the institution keeps re-checking “is this still the same legitimate user doing normal, permitted things?” throughout the session and across the journey.

It does this by combining multiple signals, such as:

- Identity signals: biometrics, device binding, cryptographic keys.

- Behavior signals: typing and navigation patterns, transaction flow, and typical cadence.

- Context signals: device, network, geolocation patterns, time, risk history.

- Conversation signals: call-center voiceprint, intent, and anomalies in phrasing or interaction.

- Risk signals: new payee, unusual amount, privilege changes, address or phone changes.

What changes operationally:

- Trust is earned and maintained, not granted once.

- Authentication becomes a step-up when risk rises.

- The goal is to catch the attacker after they pass a checkpoint but before they complete the damaging action.

Financial institutions already collect and analyze huge amounts of data about user activities. Fraudulent actions are just regular operations performed with malicious intent, so extensive behavior analytics helps detect and respond to threats in real time.

Continuous trust expands this concept to identity verification. Instead of the outdated “we checked you at login, and that’s it,” it answers:

- Is this still the same person?

- Is this action consistent with what the real user has done before?

- Has something changed — device, location, behavior, context — that requires a new check?

Just as important, continuous trust doesn’t mean repeatedly prompting users or adding friction at every step. It means applying proportionate controls based on risk, letting low-risk activity proceed, and escalating only when signals indicate an increased likelihood of fraud.
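The idea of a living trust score with proportionate controls can be sketched in a few lines. This is a toy model under stated assumptions: the action names, the thresholds in `REQUIRED_TRUST`, the starting trust of 0.9, and the multiplicative decay are all hypothetical and chosen only to show the shape of the logic.

```python
from dataclasses import dataclass

# Hypothetical minimum trust required per action; values are illustrative only.
REQUIRED_TRUST = {
    "view_balance": 0.2,
    "transfer": 0.6,
    "add_payee": 0.7,
    "change_phone": 0.8,
}


@dataclass
class Session:
    trust: float = 0.9  # trust right after a successful login check

    def observe(self, anomaly: float) -> None:
        """Lower trust in proportion to an anomaly signal in [0, 1]."""
        self.trust = max(0.0, self.trust * (1.0 - anomaly))

    def step_up_passed(self) -> None:
        """Restore trust after a successful step-up check."""
        self.trust = 0.9

    def decide(self, action: str) -> str:
        """Allow the action or ask for step-up, depending on its risk."""
        # Unknown actions are treated as risky by default.
        required = REQUIRED_TRUST.get(action, 0.8)
        return "allow" if self.trust >= required else "step_up"
```

In this sketch, a session that picks up a moderate anomaly can still view a balance without interruption, but adding a payee triggers a step-up. Low-risk activity proceeds and only risky actions escalate.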

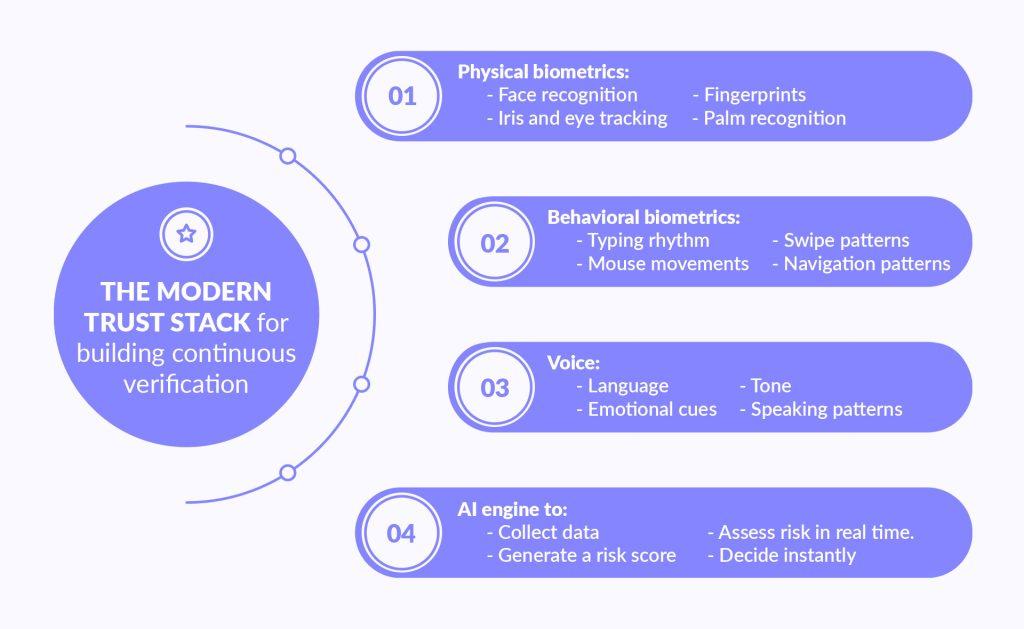

The modern trust stack for building continuous verification

As financial institutions re-architect their security models, they’re not just adding new tools. They’re building a modern “trust stack” based on:

- Who the user is (biometrics)

- How they behave (behavioral patterns, devices, context)

- What they say (voiceprint and conversational intent)

- What AI infers (risk scoring and anomaly detection)

Continuous trust is not one technology, but a layered “trust stack” that combines multiple independent signals so a single bypass doesn’t collapse the entire security model. The goal is to make identity harder to fake, fraud harder to scale, and legitimate journeys smoother.

Layer 1. Biometrics: from fingerprints to behavioral signals

Biometrics now covers both physical traits and behavioral patterns. Physical biometrics include:

- Face recognition

- Fingerprints

- Iris and eye tracking

- Palm recognition

Behavioral biometrics go further by learning how you use your device, such as:

- Typing rhythm

- Swipe patterns and how you hold the device

- Mouse movements

- Navigation patterns

The key change is that behavioral biometrics can run quietly in the background. That convenience depends on large-scale, real-time analytics: the system analyzes action sequences (behavior graphs) and large volumes of interaction signals to confirm that the same human is still in control throughout the session.
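As a simplified illustration of one behavioral signal, typing rhythm, the sketch below compares a session's average inter-key interval with a user's stored baseline. It assumes intervals are measured in milliseconds and uses a plain z-score; production systems use far richer features and models, so treat the function name and the approach as assumptions for illustration.

```python
from statistics import mean, stdev


def typing_anomaly(baseline_ms: list[float], session_ms: list[float]) -> float:
    """Return how many baseline standard deviations the session's mean
    inter-key interval lies from the user's baseline mean."""
    if len(baseline_ms) < 2 or not session_ms:
        raise ValueError("need at least two baseline samples and one session sample")
    spread = stdev(baseline_ms)
    if spread == 0:
        raise ValueError("baseline has no variation")
    return abs(mean(session_ms) - mean(baseline_ms)) / spread
```

A session typed at roughly the user's usual pace yields a value near zero, while scripted input with very short, uniform intervals yields a large value that could feed a risk score.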

Layer 2. Voice: turning conversations into a security signal

Voice adds identity and intent signals in channels where fraud often succeeds: support calls and conversational experiences. Using speech analysis, financial institutions can assess:

- Language, tone, and emotional cues;

- Speaking patterns and cadence.

As synthetic audio improves, voice security is also shifting toward detection. It uses AI to spot generated or manipulated speech rather than assuming a recording is authentic.

Layer 3. AI as the risk engine

AI is what connects all the layers and makes adaptive security possible. Traditional systems followed fixed rules: “If X, then do Y.” That approach is helpful but limited in a dynamic threat environment.

AI models ingest many signals at once and produce a real-time risk score for each session. They work by:

- Collecting data from all layers and the current session info.

- Applying learned patterns to assess risk in real time.

- Generating a risk score to decide instantly: allow, challenge, or block.

AI turns the trust stack into a dynamic system that adapts, learns, and acts based on context.
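The collect, score, decide loop described above can be sketched with a simple logistic model. The signal names, weights, bias, and thresholds below are hypothetical; a real risk engine would learn its parameters from labelled data and likely use a more capable model.

```python
import math

# Hypothetical weights; a production system would learn these from labelled data.
WEIGHTS = {
    "new_device": 1.5,
    "new_payee": 1.2,
    "behavior_anomaly": 2.0,
    "voice_spoof_suspected": 2.5,
}
BIAS = -3.0  # baseline log-odds when no signal fires


def risk_score(signals: dict[str, float]) -> float:
    """Map signal values in [0, 1] to a risk score in (0, 1).

    Raises KeyError for a signal name that has no weight.
    """
    z = BIAS + sum(WEIGHTS[name] * value for name, value in signals.items())
    return 1.0 / (1.0 + math.exp(-z))


def decide(score: float, challenge_at: float = 0.3, block_at: float = 0.8) -> str:
    """Turn a risk score into one of: allow, challenge, block."""
    if score >= block_at:
        return "block"
    if score >= challenge_at:
        return "challenge"
    return "allow"
```

With these illustrative numbers, a session with no signals is allowed, a new device combined with a new payee is challenged, and a session where every signal fires is blocked.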

Key principles

No single factor is reliable on its own. Biometrics can be spoofed, devices can be emulated, voices can be synthesized, and rules can be gamed.

What produces durable security is a combination plus context: multiple independent signals evaluated together, continuously, and weighted by the risk of the specific action.

The trust stack should also be modular.

Firms need the ability to swap vendors, add new detection layers, and evolve models as fraud techniques change — without rebuilding the entire identity architecture. This is what keeps security adaptive over time instead of locking the organization into a fixed toolset that ages quickly.

The flip side of the coin is that continuous trust also comes with a cost. To spot suspicious behavior early, companies and institutions need to collect huge amounts of data in real time. They need to be able to correlate session, device, and transaction signals as they happen, and then compare each action against both the customer’s history and known fraud patterns. Continuous trust requires building pipelines that can stream events in real time and detect when a “normal” payment starts to look like a familiar scam.

Additionally, a business needs enough historical data to define what’s normal or not for a specific customer. And it takes a lot of population-level data to recognize repeatable fraud patterns. Without that scale and speed, the system either reacts too late or generates too many false positives.
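One small building block of such a pipeline is a sliding-window check over a stream of events. The sketch below counts events per customer in a time window and flags bursts. The class name, the window size, and the limit are assumptions for illustration; a real deployment would run this kind of logic inside a streaming platform and combine it with many other features.

```python
from collections import defaultdict, deque


class VelocityMonitor:
    """Counts events per customer inside a sliding time window."""

    def __init__(self, window_seconds: float, max_events: int) -> None:
        self.window_seconds = window_seconds
        self.max_events = max_events
        self._events = defaultdict(deque)  # customer_id -> recent timestamps

    def record(self, customer_id: str, timestamp: float) -> bool:
        """Record one event; return True if the customer exceeds the limit.

        Timestamps are assumed to arrive in non-decreasing order per customer.
        """
        window = self._events[customer_id]
        window.append(timestamp)
        # Drop events that have fallen out of the window.
        while window and window[0] <= timestamp - self.window_seconds:
            window.popleft()
        return len(window) > self.max_events
```

For example, with a 60-second window and a limit of three, a fourth payment attempt within a minute is flagged, while the same customer's next payment a few minutes later is not.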

How leading banks and FinTechs are implementing a modern trust stack: real-life cases

Adoption usually starts where fraud and authentication teams feel the pain first: login, high-risk payments, and call-center verification. Here are a few notable examples.

Revolut passkeys

Revolut describes passkeys as a password alternative that’s generated locally on a user’s device and designed to resist common threats like phishing and credential enumeration.

In practice, this shifts the baseline from “something you know” to device-bound authentication. It can be unlocked with a biometric scan or device PIN and then combined with risk checks for sensitive actions.

Square’s ML-based risk manager

Square describes its risk evaluation as a fraud-risk determination generated by proprietary machine learning models. This evaluation is produced per transaction and can be used to decide whether the activity looks normal or suspicious in real time.

On the control side, the Square Risk Manager tool lets businesses operationalize those signals with actions such as declining a payment, triggering a risk alert, or invoking 3D Secure for step-up verification when conditions are met.

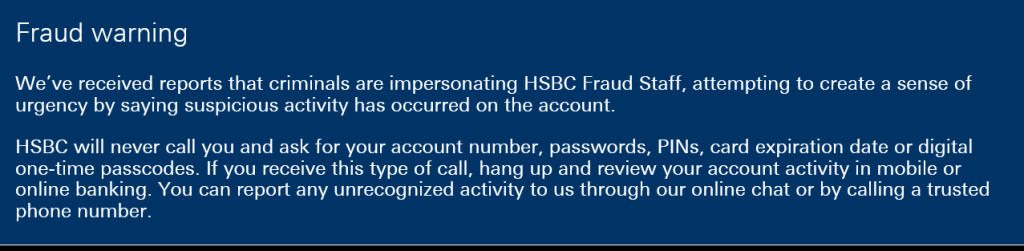

HSBC Voice ID

HSBC Bank USA’s Voice ID is presented as voice-based authentication, aiming to shorten or simplify call-center verification. The feature analyzes a client’s voice in seconds and checks over 100 behavioral and physical vocal traits.

HSBC states that Voice ID is sensitive and sophisticated enough to detect if someone is impersonating you or playing a recording.

PayPal’s real-time graph analytics (U.S.)

PayPal has been using a real-time graph database to model relationships between accounts and “assets” such as IPs, addresses, and device identifiers. That graph structure helps surface risk that’s hard to see in isolation: unusual asset sharing, suspicious transaction patterns, and connected communities that can indicate coordinated fraud rings or account takeover activity.

PayPal uses the graph like a “relationship map.” When a payment or login happens, the system checks what that account is connected to — shared devices, reused IPs, repeated addresses, or links to already-flagged accounts. If the graph shows a dense cluster of risky connections (for example, many accounts using the same device or quickly moving money through linked recipients), PayPal can treat the action as higher risk and trigger stricter controls.
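The “relationship map” idea can be illustrated with a tiny in-memory graph. This is not PayPal's implementation, which is not described at code level here; it is a generic sketch that links accounts to shared assets and uses breadth-first search to find already-flagged accounts within a few hops. The node naming scheme (`acct:`, `device:`, `ip:`) and the function names are assumptions.

```python
from collections import defaultdict, deque


def build_graph(links: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Build an undirected graph from (account, asset) pairs."""
    graph: dict[str, set[str]] = defaultdict(set)
    for account, asset in links:
        graph[account].add(asset)
        graph[asset].add(account)
    return graph


def flagged_within(graph, start: str, flagged: set[str], max_hops: int = 2) -> set[str]:
    """Return flagged accounts reachable from `start` within `max_hops` edges."""
    seen = {start}
    queue = deque([(start, 0)])
    hits = set()
    while queue:
        node, hops = queue.popleft()
        if node in flagged and node != start:
            hits.add(node)
        if hops == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in graph.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, hops + 1))
    return hits
```

Two accounts that share a device are two hops apart (account, device, account), so a default of `max_hops=2` surfaces direct asset sharing, and larger values reach further into a connected cluster.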

The flip side

These cases also show another reality: even strong authentication can’t fully protect customers when attackers shift to social engineering. Fraudsters adapt to whatever channel works fastest.

A good example is HSBC, mentioned above. At the time of writing, its website displayed an active pop-up warning customers about criminals impersonating bank fraud staff (see screenshot below). The message explicitly tells customers not to share credentials or one-time passcodes. It illustrates how banks pair verification technologies with real-time, contextual guidance to reduce “legitimate-looking” scams that bypass classic security checkpoints.

What’s holding financial firms back from implementing modern-age security systems?

Legacy systems and fragmented stacks make it hard to unify identity, fraud, and transaction data into a single, real-time risk view. Many teams also avoid change because they expect security upgrades to damage UX.

On top of that, continuous trust needs specialized engineering and AI operations that some institutions don’t have in-house.

These are some of the most widespread reasons for low adoption of continuous trust.

Practical example: why a “real-time risk score” project stalls

A mid-size bank decides to introduce an AI risk engine that scores each session and triggers step-up verification for high-risk actions. The plan depends on seeing the customer journey end-to-end in real time.

In practice, the required signals are scattered: device telemetry is owned by the mobile team, transactions are in the core platform, and service interactions are in a separate CRM. Even if a prototype model works in a notebook, the bank may lack production feature pipelines, monitoring, drift controls, incident response, etc.

As a result, the model can’t be safely deployed, or it gets switched off after a few false-positive incidents.

How Geniusee helps FinTechs build and implement a modern trust stack

Companies don’t need another point solution here. They need a trust stack that fits their product, integrates with what already exists, and can evolve as fraud tactics change.

Geniusee helps by treating identity and fraud as an end-to-end system, backed by our extensive experience with FinTechs. This is how it happens:

Product and implementation design

- Conducting deep audits of onboarding, authentication flows, and the existing defense stack.

- Identifying high-impact integration points for biometrics, voice, and AI.

- Designing workflows and customer journeys that align UX, security, and compliance.

Building and integrating

- Seamlessly integrating KYC, biometric, and fraud detection APIs into core systems.

- Implementing risk scoring logic, real-time monitoring, and secure data flows.

- Implementing real-time big data pipelines (event streaming from web/mobile/core systems) to aggregate behavioral, device, and transaction signals and power continuous risk scoring with low latency.

- Building with a focus on interoperability, auditability, and data privacy.

Owning evolution

- Handling full implementation from integration to testing and launch.

- Setting up and refining data pipelines and MLOps to support continuous improvement.

- Running optimizations based on UX and fraud metrics.

Whether you need a partner to implement new systems, modernize old ones, or handle it all so your team can stay focused, we shape our approach to fit what you actually need.

Conclusion

Passwords and one-time checks were built for a slower threat model. In today’s environment, where attackers can steal credentials at scale and increasingly impersonate real people, financial security needs to move toward continuous trust: ongoing, risk-based verification that adapts to changing behavior, device signals, context, and channel.

A modern trust stack combines biometrics, voice signals, and AI-driven risk decisions to reduce fraud without adding blanket friction. The institutions that progress fastest treat it as a modular capability: start with high-impact journeys, integrate signals across systems, and iterate using measurable fraud and UX outcomes.

If you’re planning to implement AI-based risk scoring, strengthen onboarding and authentication, or modernize fraud prevention across digital and support channels, contact Geniusee’s experts to discuss an implementation roadmap tailored to your product, architecture, and compliance constraints.