US consumers reported losing $12.5 billion to fraud in 2024, a 25% jump from the prior year, driven not by more fraud reports but by a higher proportion of people actually losing money when targeted. That’s the baseline FinTech companies are operating against right now. The threat isn’t slowing; it’s getting more precise, more automated, and increasingly AI-native.

AI FinTech risk management is the operational response. Not as a trend or a pilot programme, but as the architecture that separates financial institutions that can manage this environment from those that can’t.

Article highlights

- Fraud is getting more precise and more automated — and traditional batch-review systems leave a window that modern attacks exploit in milliseconds

- Only 29% of financial institutions have AI compliance measures aligned with the EU AI Act, while DORA enforcement is already underway for any fintech touching EU operations

- Deploying AI decisioning without governance infrastructure is the most expensive mistake in fintech risk management — the technology is easy; the audit trails, escalation paths, and model documentation are what get skipped

Why traditional risk management breaks down in FinTech

Most risk frameworks in financial services were designed around a quarterly cadence — periodic audits, batch fraud reviews, and annual compliance assessments. That tempo made sense when transactions were slower, product surfaces were stable, and fraud tactics were largely manual.

None of those conditions hold anymore. Back in 2022, organisations reported acting to manage an average of two AI-related risks. That number has doubled to four risks today, according to McKinsey’s State of AI research — and that’s just the risks organisations are actively tracking. The ones they aren’t tracking are the problem.

Rules-based systems are brittle. A fraud detection rule calibrated to your current transaction mix fails the moment you onboard a new merchant category or launch a new product. A compliance checklist built for GDPR doesn’t automatically accommodate DORA. Every exception requires manual triage, and manual triage doesn’t scale to the volume and velocity that modern FinTech operations demand.

The data from PwC’s 2025 Global Compliance Survey is striking: 85% of respondents report that compliance requirements have become more complex in the last three years, and 82% of companies plan to increase investment in at least one technology to automate and optimise compliance activities. The investment is happening. The governance frameworks to support it often aren’t.

What does AI actually change in FinTech risk management?

The functional shift is from periodic review to continuous monitoring. AI systems process transactions, flag anomalies, and cross-reference FinTech regulatory requirements in real time — not in batches run overnight. That’s not incremental improvement. It’s a different operating model.

Banks using advanced AI models report fraud detection accuracy exceeding 90%, and AI-based fraud systems are projected to save global banks over £9.6 billion annually by 2026. Those figures come from institutions that have rebuilt their fraud detection architecture around ML pipelines — not from ones that layered a tool onto an existing rules engine.

The risk categories AI addresses in FinTech are distinct:

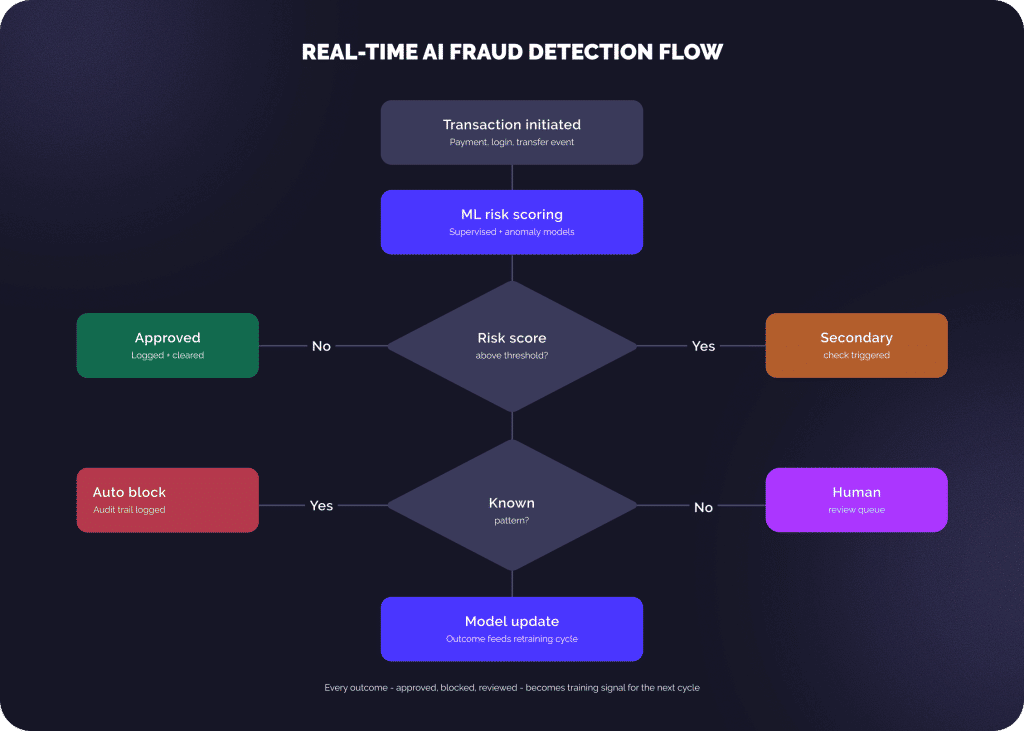

Fraud detection and financial crime

AI models trained on historical transaction data identify patterns that human analysts and rule-based systems miss. Supervised models catch known fraud patterns; unsupervised anomaly detection identifies new attack vectors before they’re formally categorised. The combination is what makes real-time fraud detection viable at scale.
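A minimal sketch of how the two signals might be combined, using only the Python standard library. It assumes a supervised fraud score between 0 and 1 arrives from a separately trained classifier (not shown), and it uses a robust z-score over the customer's recent amounts as a stand-in for unsupervised anomaly detection. The function names, thresholds, and action labels are illustrative assumptions, not a reference implementation.

```python
from statistics import median


def robust_z(value, history):
    """Modified z-score based on the median and median absolute deviation."""
    med = median(history)
    mad = median([abs(x - med) for x in history])
    if mad == 0:
        # No spread in the history: any different value is maximally unusual.
        return 0.0 if value == med else float("inf")
    return 0.6745 * (value - med) / mad


def score_transaction(amount, history, known_fraud_score,
                      fraud_threshold=0.8, anomaly_threshold=3.5):
    """Route a transaction using a supervised score plus an anomaly check.

    known_fraud_score is assumed to come from a trained classifier.
    Both thresholds are illustrative and would need calibration.
    """
    if known_fraud_score >= fraud_threshold:
        return "block_known_pattern"      # supervised model recognises it
    if abs(robust_z(amount, history)) >= anomaly_threshold:
        return "review_novel_anomaly"     # unfamiliar behaviour, no known label
    return "allow"


recent_amounts = [20, 25, 22, 30, 27, 24, 26]
print(score_transaction(500, recent_amounts, known_fraud_score=0.1))
```

With this history, a 500 payment that the classifier scores as low risk is still routed to review, because it sits far outside the customer's usual range. A production system would use richer features than amount alone.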

Regulatory compliance and AML

The Financial Conduct Authority in the UK has identified AML and fraud prevention as among the areas offering the greatest perceived benefits for AI adoption in financial services. AI can scan regulatory publications, map new obligations to internal policies, and flag compliance gaps before a regulator does, compressing workflows that used to take compliance teams weeks.

Cybersecurity and data protection

More than half of technology decision-makers in EY’s 2025 Technology Risk Pulse Survey anticipate leveraging AI for IT infrastructure (59%) and cybersecurity (58%) within the next 18 to 24 months. The threat environment demands it: synthetic identity fraud, deepfake-assisted onboarding attacks, and AI-generated phishing campaigns are now standard operating conditions, not edge cases.

Operational risk and third-party exposure

Every vendor integration is a potential attack surface. Every third-party data feed is a compliance dependency. AI-driven continuous monitoring of vendor behaviour and system anomalies replaces the annual audit model that most FinTech companies still rely on.

| Characteristic | Traditional | AI-driven |
| --- | --- | --- |
| Detection speed | Batch review (hours later) | Real-time (milliseconds) |
| Fraud detection | Rule-triggered, brittle | Pattern + anomaly-based |
| Regulatory compliance | Manual, periodic audits | Automated, continuous |
| Third-party risk | Annual vendor audit | Continuous vendor scoring |
| Adaptability | Manual update required | Learns from new data |

What’s the regulatory environment requiring in 2026?

This is where we see the sharpest disconnect between what FinTech teams are building and what they’re actually obligated to deliver. The RegTech picture in finance has shifted significantly since 2024, and a lot of teams are still planning against the old one.

DORA — the baseline for EU-adjacent operations

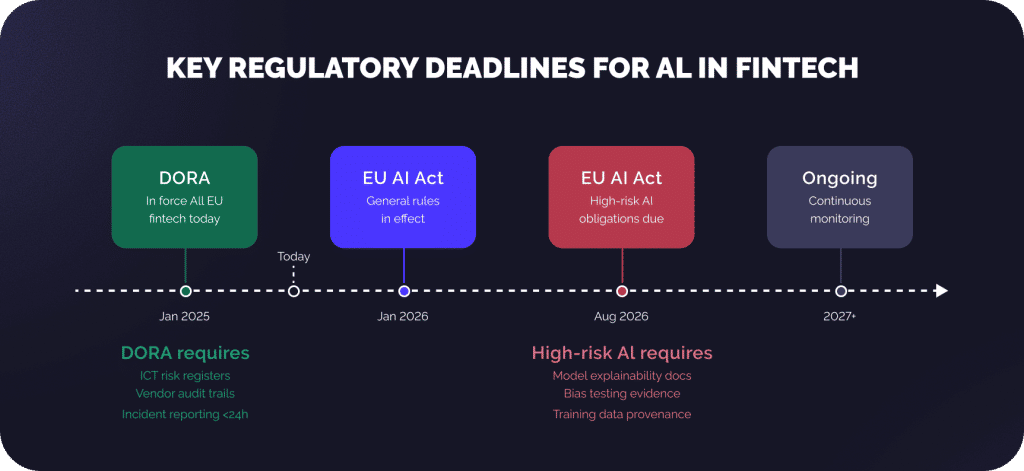

DORA became fully applicable on January 17, 2025, binding financial entities and their ICT providers to end-to-end operational resilience practices across five core areas: ICT risk and governance, incident response and reporting, third-party risk management, digital resilience testing, and information sharing. Penalties can reach up to 2% of annual worldwide turnover, which is not a theoretical risk for FinTech companies doing business with EU institutions.

The practical implication: if you can’t generate a complete audit trail of a third-party ICT incident within the required timeframe, your vendor management process has a DORA-compliance gap. Many FinTech startups that launched before 2024 do.

The EU AI Act — explainability becomes law

The EU AI Act categorises credit scoring, fraud detection, and AML systems as high-risk AI applications. For those systems, the Act mandates risk management systems that identify, analyse, and mitigate risks throughout the AI lifecycle, data governance ensuring training datasets are relevant and representative, and – for models with systemic risk – adversarial testing and incident reporting.

The August 2026 deadline for high-risk AI obligations is the date that matters. FinTech companies using black-box ML models for credit decisions or fraud scoring need to audit those models, document training data provenance, and demonstrate bias testing before that date. Only 29% of financial institutions have implemented AI compliance measures, which means the majority are running a compliance risk they haven’t fully assessed.
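As one illustration of what bias testing can look like in practice, the sketch below compares approval rates across groups and reports the ratio of the lowest rate to the highest. The EU AI Act does not prescribe this particular metric; the 0.8 review threshold is an assumption borrowed from the "four-fifths" heuristic, and the group labels and data are made up.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs. Returns rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest group approval rate."""
    rates = approval_rates(decisions)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Made-up credit decisions for two hypothetical groups.
sample = ([("A", True)] * 8 + [("A", False)] * 2
          + [("B", True)] * 6 + [("B", False)] * 4)

ratio = disparate_impact_ratio(sample)
REVIEW_THRESHOLD = 0.8  # assumption: heuristic cut-off, not a legal standard
if ratio < REVIEW_THRESHOLD:
    print(f"ratio {ratio:.2f}: flag model for bias review")
```

A single ratio is a starting point for documentation rather than proof of fairness; a fuller audit would look at error rates per group and at the training data itself.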

Model governance as a regulatory expectation

Germany’s BaFin made the regulatory direction explicit in December 2025: artificial intelligence is no longer primarily an innovation or ethics issue, but explicitly part of ICT risk management under DORA. AI governance is not an innovation project, but a permanent operating state that must be strategically managed, organisationally anchored, and operationally implemented.

That framing matters. It closes the argument that model governance is a compliance nicety. It’s now a supervisory requirement with teeth.

How does an effective AI risk management framework actually work?

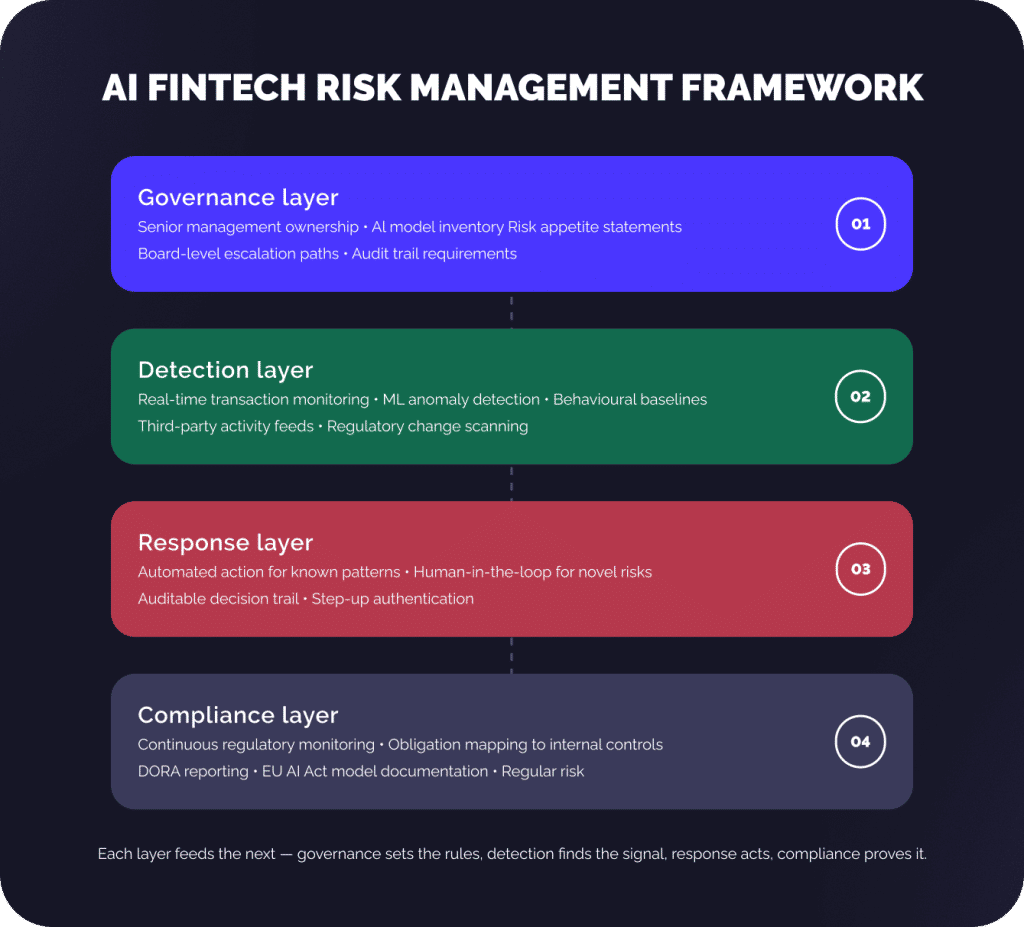

We’d recommend thinking about it in four connected layers, not as separate initiatives.

- Governance layer. Senior management ownership — not delegated to a compliance team, but owned at the executive level. Board oversight of AI has tripled in one year among Fortune 100 companies, rising from 16% to 48% in 2025, according to EY’s Center for Board Matters research. That shift isn’t PR. It reflects regulators signalling that they expect AI risk to live at the board level. Practically, this means maintaining a documented AI model inventory — what each model does, what data it was trained on, who owns it, when it was last audited.

- Detection layer. Real-time monitoring across transactions, user behaviour, third-party activity, and regulatory feeds. This is where your ML pipelines operate. The systems that work best combine supervised models trained on labelled fraud data with unsupervised anomaly detection, so you catch both known patterns and genuinely novel attack vectors.

- Response layer. Automated responses for known risk patterns, human-in-the-loop escalation for novel ones. PwC’s 2025 Responsible AI Survey found that 56% of organisations now place Responsible AI responsibility in first-line teams — IT, engineering, and data — rather than centralised compliance committees. That structural shift matters: governance happens where decisions are made, not in a separate review cycle. Every automated action should generate an auditable decision trail.

- Compliance layer. Continuous regulatory monitoring with automated mapping of new obligations to internal controls. Per Deloitte’s financial services research, generative AI can help compliance teams ask fact-based questions, compare and extract requirements from multiple regulations, assess compliance gaps by comparing regulations to internal policies, and communicate changes through intuitive interfaces. This is what we’d call the highest AI ROI application in FinTech compliance right now, compressing workflows that used to require significant legal and compliance headcount.
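The response layer described above (automated action for known patterns, human escalation for novel ones, and an audit record either way) can be sketched roughly as follows. The pattern names, actions, and record fields are hypothetical; a real system would write to append-only storage rather than an in-memory list.

```python
import json
from datetime import datetime, timezone

# Hypothetical mapping of recognised risk patterns to automated actions.
KNOWN_PATTERNS = {
    "card_testing": "block",
    "velocity_spike": "step_up_auth",
}


def respond(event_id, pattern, model_version, audit_log):
    """Pick an action and append an auditable record of the decision."""
    # Anything not in the known-pattern table goes to a human reviewer.
    action = KNOWN_PATTERNS.get(pattern, "escalate_to_human")
    record = {
        "event_id": event_id,
        "pattern": pattern,
        "action": action,
        "automated": action != "escalate_to_human",
        "model_version": model_version,
        "decided_at": datetime.now(timezone.utc).isoformat(),
    }
    audit_log.append(json.dumps(record, sort_keys=True))
    return action


log = []
respond("evt-001", "card_testing", "fraud-v3", log)
respond("evt-002", "unseen_pattern", "fraud-v3", log)
print(len(log), "decisions recorded")
```

Recording the model version alongside each decision is the design choice that matters here: it lets a reviewer reconstruct which model produced which action long after the model has been retrained.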

What role does due diligence play in AI risk management for FinTech?

Due diligence in this context operates on two fronts simultaneously. External: the ongoing assessment of vendors, data providers, and technology partners before and after integration. Internal: the continuous review of your own AI models to ensure they’re performing as intended and haven’t drifted from their original calibration.

External due diligence is now a regulatory requirement, not just prudent practice. Under DORA, financial entities must maintain detailed registers of all third-party ICT providers, conduct structured vendor assessments, and document exit strategies for critical dependencies. The regime extends to subcontractors — not just direct vendor relationships.
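A rough sketch of a provider register with a gap check that also walks subcontractors. The field names are illustrative assumptions and do not follow the official DORA register templates, and the rule that only providers supporting critical functions need a documented exit strategy is a simplification for the example.

```python
from dataclasses import dataclass, field


@dataclass
class IctProvider:
    name: str
    supports_critical_function: bool
    last_assessment_done: bool = False
    has_exit_strategy: bool = False
    subcontractors: list = field(default_factory=list)


def register_gaps(providers):
    """Return (provider, issue) pairs, including gaps found in subcontractors."""
    gaps = []
    for p in providers:
        if not p.last_assessment_done:
            gaps.append((p.name, "no structured assessment on file"))
        if p.supports_critical_function and not p.has_exit_strategy:
            gaps.append((p.name, "missing exit strategy"))
        # The regime reaches beyond direct vendors, so recurse into the chain.
        gaps.extend(register_gaps(p.subcontractors))
    return gaps


hosting = IctProvider("HostCo", supports_critical_function=True,
                      last_assessment_done=True)
kyc = IctProvider("KycVendor", supports_critical_function=True,
                  last_assessment_done=True, has_exit_strategy=True,
                  subcontractors=[hosting])

for name, issue in register_gaps([kyc]):
    print(f"{name}: {issue}")
```

In this example the direct vendor is clean, but the check still surfaces the hosting subcontractor, which is exactly the kind of dependency a register limited to direct relationships would miss.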

Internal due diligence is where we see the most underinvestment. Many banks are still struggling to validate the performance of AI models because there’s often no way to back-test them against historical scenarios, according to McKinsey and IACPM’s joint research on generative AI in credit risk. A fraud detection model trained in 2023 may have significant blind spots against 2026 attack vectors. Regular risk assessments of your AI systems aren’t bureaucratic overhead — they’re how you maintain customer trust and satisfy the model risk obligations that regulators now explicitly apply to AI.
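One common way to check whether a model's inputs or scores have drifted from their original calibration is the Population Stability Index (PSI), which compares a baseline distribution with a recent one. The sketch below is a minimal standard-library version under stated assumptions: equal-width bins derived from the baseline, a small floor to avoid dividing by zero, and the widely quoted rule of thumb that values above roughly 0.25 indicate a significant shift. That cut-off is a convention, not a regulatory figure.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor each share at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline_scores = [i / 100 for i in range(100)]             # scores at training time
recent_scores = [min(s + 0.3, 0.99) for s in baseline_scores]  # shifted upward

drift = psi(baseline_scores, recent_scores)
if drift > 0.25:  # assumption: conventional rule-of-thumb threshold
    print(f"PSI {drift:.2f}: schedule a model review")
```

PSI only says that a distribution has moved, not why, so a high value is a trigger for the human review described above rather than a verdict on the model.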

According to PwC’s research, early-stage involvement of compliance experts can reduce the likelihood of regulatory breaches by over 70%. That’s not an argument for slowing down AI FinTech development. It’s an argument for integrating compliance expertise into the product and engineering cycle from the start.

What are the most common mistakes FinTech companies make with AI risk management?

The most expensive one is deploying AI decisioning systems without governance infrastructure. The technology is genuinely not that hard to implement. The audit logs, escalation paths, model documentation, and human review checkpoints that regulators require are harder and less interesting to build — and they’re the ones that get skipped.

Only 7% of financial services followers feel highly prepared in terms of governance and risk management practices when adopting generative AI, according to Deloitte’s research on generative AI adoption in financial services. That gap between deployment enthusiasm and governance readiness is exactly where regulatory exposure accumulates.

The second pattern worth calling out: treating compliance as a point-in-time exercise rather than a continuous monitoring function. Nearly 90% of compliance executives report that the breadth of their compliance responsibilities has increased in the last three years. The scope keeps expanding. An annual compliance review is no longer a viable response to a regulatory environment that updates continuously — particularly with the EU AI Act introducing phased obligations through August 2026 and DORA enforcement already underway.

The FinTech companies managing this well treat risk management as a product function. The risk team sits alongside engineering. Risk requirements go into the product backlog. Model governance is a continuous deployment concern, not a quarterly review.

What is AI FinTech risk management, and how does it differ from traditional risk management?

AI FinTech risk management uses machine learning, real-time monitoring, and increasingly autonomous systems to identify, assess, and respond to financial, operational, and compliance risks continuously — rather than through periodic manual review.

The practical difference: traditional batch-based fraud detection reviews transactions hours after they occur; AI-driven systems flag and respond in milliseconds. The governance challenge is that autonomous systems require their own risk frameworks — model inventories, audit trails, and human oversight protocols — that traditional risk approaches don’t address.

Which regulations apply to AI systems in FinTech in 2026?

The two most consequential are DORA and the EU AI Act. DORA, applicable since January 2025, mandates comprehensive ICT risk management, third-party oversight, and incident reporting for financial entities and their technology providers operating in the EU.

The EU AI Act classifies credit scoring, fraud detection, and AML systems as high-risk AI, requiring explainability, bias testing, and data governance documentation, with full obligations effective from August 2026. In the US, the model risk management guidance issued by the Federal Reserve (SR 11-7) and the OCC (Bulletin 2011-12) remains the baseline, with FDIC and Federal Reserve oversight extending to AI systems by application of existing supervisory frameworks.

What does explainable AI mean in a FinTech risk context, and why does it matter?

Explainable AI means a model’s decision can be traced back to specific inputs and logic — so a credit denial can be audited, a fraud flag can be defended in a regulatory review, and bias in outcomes can be identified and corrected. It matters operationally because unexplainable models are fragile: when they fail, you can’t diagnose why, and you can’t fix the underlying issue.

It matters legally because the EU AI Act and existing fair lending regulations in multiple jurisdictions require institutions to provide specific reasons for adverse decisions. A black-box model that produces outputs you can’t explain is a liability in both contexts.
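To make "traced back to specific inputs" concrete, here is a small sketch that derives reason codes from a linear scorecard: each feature's contribution is its weight times its distance from a baseline applicant, and the most negative contributions become the stated reasons. The weights, baseline, and feature names are invented for illustration. Real credit models are often nonlinear and need dedicated attribution methods, so treat this as the simplest possible case.

```python
# Hypothetical linear scorecard: negative weights lower the score.
WEIGHTS = {
    "debt_to_income": -4.0,
    "missed_payments": -1.5,
    "years_of_history": 0.5,
}
# Hypothetical reference applicant that contributions are measured against.
BASELINE = {
    "debt_to_income": 0.30,
    "missed_payments": 0,
    "years_of_history": 5,
}


def explain(applicant, top_n=2):
    """Return (score, reasons), where reasons are the features that hurt most."""
    contributions = {
        f: WEIGHTS[f] * (applicant[f] - BASELINE[f]) for f in WEIGHTS
    }
    score = sum(contributions.values())
    # Sort ascending so the most negative contributions come first.
    adverse = sorted((c, f) for f, c in contributions.items() if c < 0)
    return score, [f for _, f in adverse[:top_n]]


applicant = {"debt_to_income": 0.55, "missed_payments": 2, "years_of_history": 6}
score, reasons = explain(applicant)
print(reasons)
```

For this applicant the two reasons returned are missed payments first and debt-to-income second, which is the kind of specific, ordered explanation an adverse-decision notice needs.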

How should FinTech companies approach third-party risk management under DORA?

Treat it as continuous monitoring, not a periodic audit. DORA requires maintaining detailed registers of all third-party ICT providers, including subcontractors for critical functions, with documented contract terms covering audit rights, incident reporting obligations, and exit arrangements.

The practical starting point: map every external integration to its risk classification, identify which vendor relationships would trigger DORA reporting obligations if they failed, and build automated monitoring of vendor performance and security posture where the risk profile justifies it. Documentation of exit strategies is a formal requirement, not optional.

What’s the right governance structure for AI risk in a FinTech company?

The structure that regulators now expect (and that high-performing firms are actually implementing) places ownership at the senior management or board level, with execution accountability in first-line teams. The AI model inventory lives in the governance layer: every model is documented with its purpose, training data, ownership, and last audit date.

Risk appetite decisions about the actions autonomous AI agents may take in finance belong at the executive level; automated responses to known risk patterns can be first-line. The critical element is that human oversight escalation paths exist and are tested, not just documented.
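The model inventory described above can start as something very small. The sketch below records the four documented attributes (purpose, training data, ownership, last audit date) and lists models whose audit is overdue. The 180-day audit interval and the example entries are assumptions for illustration; the right interval depends on the model's risk classification.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ModelRecord:
    name: str
    purpose: str
    training_data: str
    owner: str
    last_audit: date


def overdue_audits(inventory, today, max_age_days=180):
    """Names of models whose last audit is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [m.name for m in inventory if m.last_audit < cutoff]


# Hypothetical inventory entries.
inventory = [
    ModelRecord("fraud_scoring_v3", "real-time fraud scoring",
                "2023 card transactions", "payments-risk", date(2025, 6, 1)),
    ModelRecord("credit_limit_v1", "credit limit assignment",
                "2024 applications", "lending", date(2026, 1, 15)),
]

print(overdue_audits(inventory, today=date(2026, 3, 1)))
```

Passing `today` explicitly rather than reading the system clock inside the function keeps the check reproducible, which helps when the output itself becomes audit evidence.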

Is AI itself a source of risk in FinTech, and how should it be managed?

Yes, and this deserves more attention than it typically gets. AI models introduce model risk (performing differently than intended), data bias risk (unrepresentative training data producing skewed outputs), and operational risk (autonomous decisions triggering unintended consequences at scale).

The AI systems managing your financial risk need their own governance: regular audits, drift monitoring, and defined human review checkpoints for high-impact decisions. McKinsey’s research on generative AI in credit risk specifically flags hallucination risk in LLMs as a material concern for use cases requiring high accuracy — which is most FinTech risk applications.

Building AI governance into your operating model from the start is substantially less expensive than retrofitting it under regulatory pressure.