Efficiency

Custom LLMs are faster and more accurate at your specific tasks because they're fine-tuned to your data, workflows, and objectives. And unlike hosted public models such as OpenAI's, which charge by usage volume, custom LLMs have predictable costs tied to the infrastructure they run on.

Domain knowledge

A custom-trained LLM built on your internal data understands your context, terminology, and industry specifics far better than generic models. This means more relevant outputs, fewer errors, and better alignment with your business goals.

Security

With a custom LLM, your data stays yours. You control the training environment and avoid the risks of data leakage, third-party exposure, or your proprietary information ending up in someone else's model.

Fintech

With our integrated solution approach, you can seamlessly connect your business-critical applications. Whether you need ERP, SCM, CRM, e-commerce, or specialized niche platforms such as document management and manufacturing execution systems, we unify these diverse tools into a single digital ecosystem.

Edtech

We develop LLM solutions that automate legal agreements and content generation, help manage student data privacy, and support regulatory compliance.

Retail

Improve contract management, protect customer data privacy, and use AI to support regulatory compliance across global markets.

Real estate

Real estate businesses leverage LLMs to streamline property listings, tenant screening, property management, and transactions.

With years of experience in AI development, we help you adopt AI securely, with more control and better performance, tailored to your business infrastructure.

Domain expertise

Up to 2–3x better results on your queries. Fine-tuned LLMs understand your industry-specific language, internal context, and data structure, so they deliver more relevant and accurate outcomes.

Reproducible results

You get consistent performance. Unlike third-party models that may change over time without notice, your own LLM changes only when you decide to update it, so it delivers stable, reproducible outputs you can rely on for critical workflows.

Cost predictability

You don't pay for data volume; you pay for the servers running your models. This gives you full cost transparency, especially at scale: even with large datasets, you can accurately predict how much your LLM will cost each month.

Security

0% of your data leaves your environment. Unlike with public models, your data stays within your private infrastructure and cannot be accessed or used for external training. You retain full control and ownership.

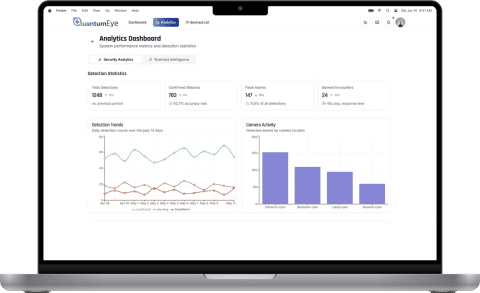

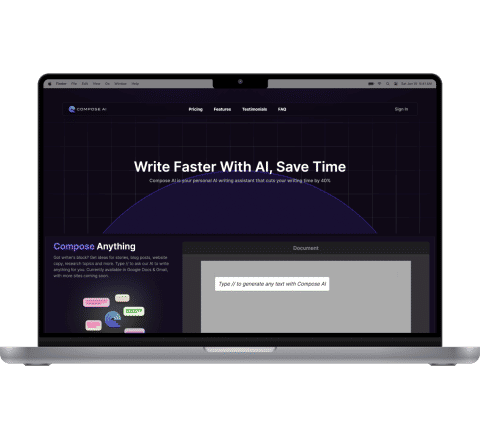

Our portfolio

LLM Models

LLM as a Service

LLM Infrastructure

Step 1 – Defining objectives

During this phase, we define your specific needs and goals, market challenges, the context in which the model will operate, and the use cases the LLM will address. By the end of this phase, you have clear objectives and success criteria.

Step 2 – Data preparation

Our team helps you collect your data, then cleans and annotates it for model training and fine-tuning. This involves resolving inconsistencies, handling missing values, and labeling data for the LLM to learn from. The outcome is a refined, high-quality dataset ready for training your model.

Step 3 – Model development

Next, we select the most suitable LLM architecture based on your needs, then train and fine-tune the model using your prepared data to maximize performance.

Afterwards, we evaluate the model to ensure it meets your target metrics. This process includes multiple iterations of training, evaluation, and improvement.

Step 4 – Deployment and maintenance

Once tested, we deploy your custom LLM to scalable infrastructure. We handle ongoing hosting, maintenance, and support to keep your model up to date. As your data and use cases evolve, we can retrain and enhance the model to maintain high performance.

Over more than 8 years of versatile experience, Geniusee has built a team with a proven track record.

Certified AWS Partner delivering secure, scalable cloud-native solutions.

ISO-compliant processes ensuring quality, security, and reliability.

Trusted integration partner for financial data connectivity and open banking.

Team of ISTQB-certified QA engineers for world-class software testing.

Consistently rated ★5.0 by clients for reliability and delivery excellence.

Accredited partnership supporting advanced testing and continuous QA automation.