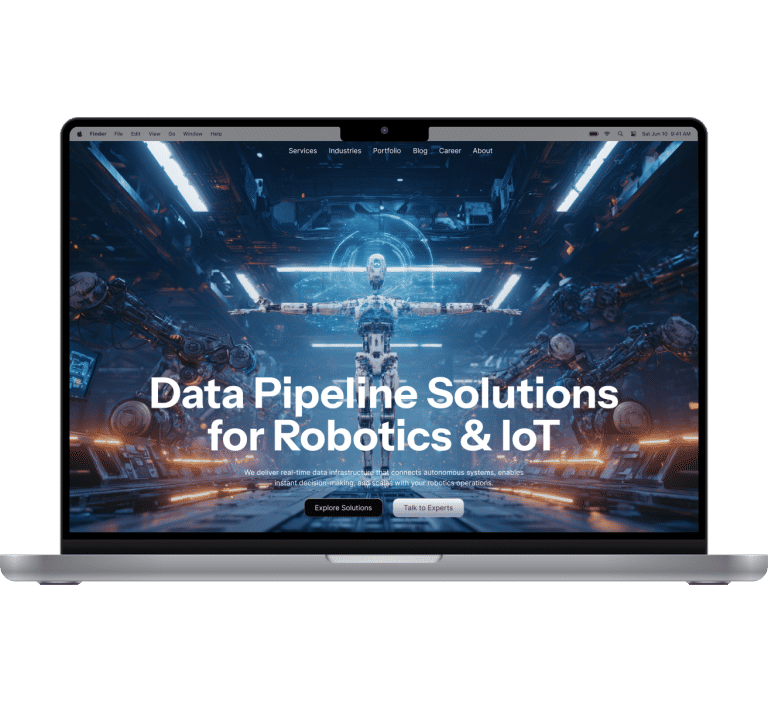

About the client

Our client operates at the intersection of robotics, automation, and large-scale data processing. Their platform combines autonomous robots, warehouse-as-a-service capabilities, and real-time data infrastructure to help enterprises streamline fulfillment and optimize operational throughput. With robots generating massive data streams every second, the client’s ecosystem relies on fast, reliable, and cost-efficient data pipelines to unlock real-time insights and keep high-velocity warehouse operations running smoothly.

Business context

The client approached us with a clear objective: strengthen the data-processing backbone that powers their fleet of robots. Their existing system struggled to ingest and structure real-time machine data at scale, limiting operational efficiency. Leveraging our data engineering expertise, we architected a high-throughput pipeline that captures and processes information in real time, feeds it into a unified data warehouse, and supports more reliable robot performance and smarter resource allocation.

The system had to capture robot-generated data with high accuracy and near-zero delay to support real-time operations.

Data gaps across multiple sources created inconsistencies that required a more reliable collection and validation flow.

Frequent workload spikes exposed weaknesses in system stability, pushing the need for a more resilient foundation.

The client needed a cloud architecture that could handle up to 10 GB/s of incoming data without driving costs out of control.

Infrastructure performance had to remain stable regardless of data volume, which required a design free from throughput bottlenecks.

Solutions we implemented

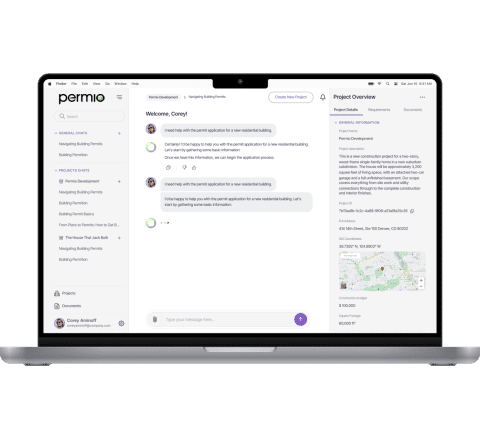

Product development

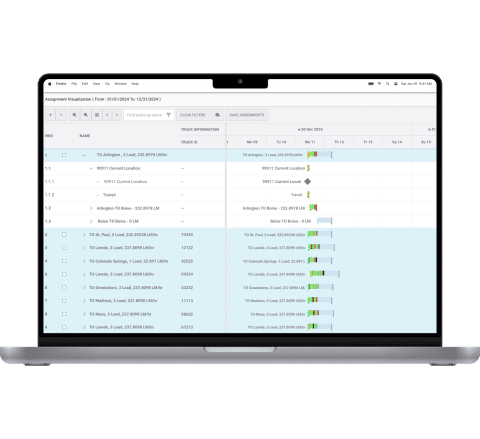

Our team designed and built a data pipeline capable of handling diverse, high-velocity inputs from IoT devices, scanners, CRM systems, internal databases, and third-party services. The solution processes data in real time, routes it into dedicated data lakes, and prepares it for analytics dashboards and operational metrics.

Business analysis

We began with a detailed discovery and scoping phase, outlining all data sources, dependencies, and expected workloads. The team created a work breakdown structure (WBS) for the MVP to align priorities with the client, reduce rework, and establish clear acceptance criteria and milestones for development.

Architecture and infrastructure development

Our engineers defined the target architecture and delivery workflows. The solution was built around cloud microservices to support isolation, cost efficiency, and scalability. Internal services communicate securely without external exposure, improving both performance and safety.

Data engineers and DevOps specialists synchronized the pipeline architecture with automated data-quality checks, ensuring validation at each processing stage.

Design of the system

This stage focused on system behavior rather than UI. We mapped how data should flow through the pipeline from every source to storage, processing, and analytics. Based on these workflows, the team defined all system components, infrastructure elements, and processing rules. Figma was used to visualize end-to-end architecture for both teams.

MVP development and validation

We started with a 1–2 day proof of concept followed by 1–2 weeks of testing to confirm feasibility and refine the development roadmap. The first full MVP was delivered in three weeks and monitored for a month under gradually increasing data loads. Real user feedback shaped rapid iterations and performance improvements.

System monitoring

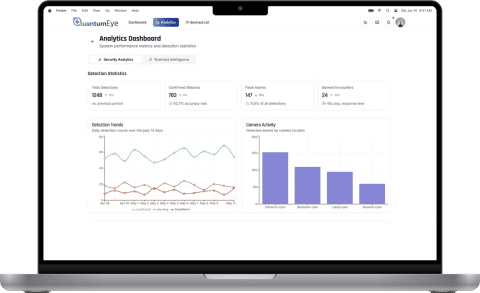

We implemented continuous monitoring that tracks key data-flow metrics and validates the health of every pipeline component. When anomalies occur — such as stalled extraction or irregular data intervals — automated alerts are pushed to Slack and email to ensure rapid response.

Automated data quality control

The pipeline includes a structured validation process that compares raw input data with processed production outputs across defined time windows. This ensures completeness, identifies empty or corrupted records, and triggers analytics only when data passes all quality checkpoints.

Microservices-based architecture

The solution is built on isolated microservices that handle ingestion, processing, storage, and validation independently. This approach improves fault tolerance, shortens deployment cycles, and allows each component to scale without affecting the entire system.

Third-party integrations

The pipeline seamlessly interacts with multiple external and internal systems, including Kafka streams, CRM platforms, proprietary databases, and IoT devices. This unified integration layer supports consistent data flow across all endpoints.

Pipeline stability alerts

A dedicated alerting mechanism detects when the pipeline is idle, blocked, or missing expected data. These real-time notifications help prevent service disruption and maintain uninterrupted robotic operations.

MVP delivered in one month

Our team shipped the first operational version of the data pipeline within a month, giving the client a fast track to real-world validation.

Stable high-load data processing

The improved pipeline now handles continuous multi-source ingestion without data gaps, enabling robots to operate on complete and timely information.

Automated issue detection across the ecosystem

We introduced monitoring and alerting that instantly flags pipeline stalls, data delays, or quality issues, significantly reducing downtime risks.

Streamlined pipeline development workflow

The collaboration resulted in a more predictable development cycle, backed by PoC testing, roadmap planning, and transparent performance metrics.