Computers work well with structured information, such as tables in a database. People, however, communicate with words, not tables, and unstructured language is much harder for a computer to process.

Most of the information in the world is unstructured: it is plain text in English or another language. Can machines be taught to extract important data from it? This problem is the focus of a subfield of artificial intelligence called natural language processing, or NLP. In this article, we will look at how it works.

Can computers understand language?

Since the beginning of the computer age, developers have been trying to teach computers to understand ordinary languages such as English. People have been writing things down for thousands of years, and it would be very useful if machines could read and parse all that text.

Computers still cannot fully understand human language the way people do, but they can already do a great deal. NLP can produce impressive results and save a huge amount of time.

Extraction of meaning

Reading and understanding English text is a very complicated process. In addition, people often do not write logically or consistently. For example, what does this news headline mean?

“Environmental regulators grill business owner over illegal coal fires”.

Are the regulators questioning a business owner about illegal coal burning? Or are they literally cooking the owner on a grill? You probably guessed right. But can a computer?

Solving a complex task with machine learning usually means building a pipeline. The idea is to break the problem into very small parts and solve each one separately. By chaining several models so that each passes its output to the next, you can get great results.
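To make the pipeline idea concrete, here is a minimal sketch in Python. The stage names (lowercase, split_words, drop_short) are hypothetical placeholders invented for this illustration; in a real NLP system each stage would be a trained model rather than a one-line function.

```python
from functools import reduce
from typing import Callable, List

# Each stage is an ordinary function. These names are illustrative only.
def lowercase(text: str) -> str:
    return text.lower()

def split_words(text: str) -> List[str]:
    return text.split()

def drop_short(words: List[str]) -> List[str]:
    # Keep only words longer than two characters.
    return [w for w in words if len(w) > 2]

def run_pipeline(data, stages: List[Callable]):
    # Feed the output of each stage into the next one, in order.
    return reduce(lambda value, stage: stage(value), stages, data)

result = run_pipeline("London is the capital", [lowercase, split_words, drop_short])
print(result)  # ['london', 'the', 'capital']
```

Because every stage only depends on the output of the previous one, any stage can be replaced or improved without touching the others.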

NLP pipeline step by step

Let’s examine the following excerpt from Wikipedia:

“London is the capital and most populous city of England and the United Kingdom. Standing on the River Thames in the south-east of the island of Great Britain, London has been a major settlement for two millennia. It was founded by the Romans, who named it Londinium.”

This passage contains valuable facts—London is a city, it’s in England, and it was founded by the Romans. But before a computer can extract these ideas, we must teach it the basic structure of written language. That is what the NLP pipeline is for.

Step 1: Sentence Segmentation

The first stage is splitting the text into individual sentences:

London is the capital and most populous city of England and the United Kingdom.

Standing on the River Thames…

It was founded by the Romans…

Each sentence represents one thought. It’s easier to analyze units this small than a full paragraph. While simple punctuation rules can work, modern NLP uses more advanced, context-aware methods.
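The simple punctuation rule mentioned above might look like the following sketch. It is only the naive approach, assuming that a sentence ends with ".", "!" or "?" followed by whitespace and a capital letter; it is not how context-aware segmenters work, and it will split incorrectly after abbreviations such as "Dr."

```python
import re
from typing import List

TEXT = (
    "London is the capital and most populous city of England and the "
    "United Kingdom. Standing on the River Thames in the south-east of the "
    "island of Great Britain, London has been a major settlement for two "
    "millennia. It was founded by the Romans, who named it Londinium."
)

def split_sentences(text: str) -> List[str]:
    # Split on whitespace that follows ., ! or ? and precedes a capital letter.
    parts = re.split(r"(?<=[.!?])\s+(?=[A-Z])", text)
    return [p.strip() for p in parts if p.strip()]

sentences = split_sentences(TEXT)
for sentence in sentences:
    print(sentence)
```

On the Wikipedia excerpt this produces the three sentences listed above.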

Step 2: Tokenization

Next, we split each sentence into words or tokens. Using the first sentence:

London is the capital and most populous city of England and the United Kingdom.

Tokenization produces:

“London”, “is”, “the”, “capital”, “and”, “most”, “populous”, “city”, “of”, “England”, “and”, “the”, “United”, “Kingdom”, “.”

In English, spaces often separate tokens, but punctuation is also treated as a token because it carries meaning.
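A rough tokenizer for this sentence can be sketched with one regular expression. This is an assumption-laden simplification: it treats every run of letters or digits as a word and every other non-space character as its own token, so it would also split hyphenated words such as "south-east" into three tokens.

```python
import re
from typing import List

def tokenize(sentence: str) -> List[str]:
    # \w+ matches a run of letters or digits; [^\w\s] matches one punctuation mark.
    return re.findall(r"\w+|[^\w\s]", sentence)

tokens = tokenize(
    "London is the capital and most populous city of England and the United Kingdom."
)
print(tokens)
```

The final period comes out as a separate token, matching the list above.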

Step 3: Part-of-Speech Tagging

Now we determine the part of speech for each token—noun, verb, adjective, etc. Models trained on millions of annotated sentences help classify words based on their context.

For example:

London → Proper Noun

is → Verb

the → Article

capital → Noun

and → Conjunction

The model does not understand meaning—it relies on statistical patterns from training data.
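To show what "statistical patterns" can mean in the simplest case, here is a toy tagger that remembers the most frequent tag seen for each word. The training sample is invented for this illustration and is tiny; unlike real taggers, this baseline ignores context entirely, so it is a sketch of the idea rather than a usable model.

```python
from collections import Counter, defaultdict

# Tiny hand-labelled sample, invented for illustration only.
TRAINING = [
    [("London", "Proper Noun"), ("is", "Verb"), ("the", "Article"), ("capital", "Noun")],
    [("Paris", "Proper Noun"), ("is", "Verb"), ("a", "Article"), ("city", "Noun")],
    [("the", "Article"), ("city", "Noun"), ("and", "Conjunction"),
     ("the", "Article"), ("river", "Noun")],
]

def train(tagged_sentences):
    # Count how often each word appears with each tag.
    counts = defaultdict(Counter)
    for sentence in tagged_sentences:
        for word, tag in sentence:
            counts[word.lower()][tag] += 1
    # Keep only the most frequent tag per word.
    return {word: tags.most_common(1)[0][0] for word, tags in counts.items()}

def tag(tokens, model, default="Noun"):
    # Words never seen in training fall back to a default tag.
    return [(tok, model.get(tok.lower(), default)) for tok in tokens]

model = train(TRAINING)
tagged = tag(["London", "is", "the", "capital", "and"], model)
for word, label in tagged:
    print(word, "→", label)
```

The tagger has no notion of what a capital is. It only repeats the labels it has counted, which is the point made above.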

Step 4: Lemmatization

Words often appear in various forms. Lemmatization reduces them to their base form (lemma).

Example: pony and ponies both map to the lemma pony.

In our sentence, the only change is:

is → be

This helps the computer treat different forms of the same word as a single concept.
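A very small rule-based lemmatizer can illustrate the idea. The lookup table and suffix rules below are assumptions made for this sketch and cover only a handful of English forms; real lemmatizers rely on much larger dictionaries. Note that the function takes the part of speech from Step 3 as input, because the same spelling can need different treatment depending on its role.

```python
# Irregular verb forms have to be listed explicitly (illustrative sample only).
IRREGULAR_VERBS = {"is": "be", "are": "be", "was": "be", "were": "be", "has": "have"}

def lemmatize(word: str, pos: str) -> str:
    lower = word.lower()
    if pos == "Verb" and lower in IRREGULAR_VERBS:
        return IRREGULAR_VERBS[lower]
    if pos == "Noun":
        if lower.endswith("ies") and len(lower) > 4:
            return lower[:-3] + "y"   # ponies -> pony
        if lower.endswith("s") and not lower.endswith(("ss", "us")) and len(lower) > 3:
            return lower[:-1]         # towns -> town
    # Anything not covered by a rule is returned unchanged.
    return word

print(lemmatize("ponies", "Noun"))
print(lemmatize("is", "Verb"))
print(lemmatize("populous", "Adjective"))
```

Without the part-of-speech check, the plural rule would wrongly strip the final "s" from the adjective "populous".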

Step 5: Identifying Stop Words

Some words—such as “the”, “and”, “a”—occur frequently but add little meaning in statistical analysis. These are stop words.

Projects may use predefined lists, but these vary by context. For example, a search engine for rock bands wouldn’t want to ignore “The” because of names like The The.
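Filtering with a predefined list can be sketched as follows. The list here is a small sample chosen for this example (only "the", "and" and "a" come from the text above; the rest are assumptions), and the second call shows how a project such as the band search engine might override it.

```python
from typing import Iterable, List

# Illustrative stop-word list; real lists are longer and project-specific.
STOP_WORDS = {"the", "and", "a", "an", "of", "is"}

def remove_stop_words(tokens: Iterable[str], stop_words=STOP_WORDS) -> List[str]:
    # Compare in lowercase so "The" and "the" are treated alike.
    return [t for t in tokens if t.lower() not in stop_words]

tokens = ["London", "is", "the", "capital", "and", "most", "populous", "city",
          "of", "England", "and", "the", "United", "Kingdom", "."]
content = remove_stop_words(tokens)
print(content)

# A band search engine could drop "the" from the list to keep names intact.
band = remove_stop_words(["The", "The"], stop_words=STOP_WORDS - {"the"})
print(band)
```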

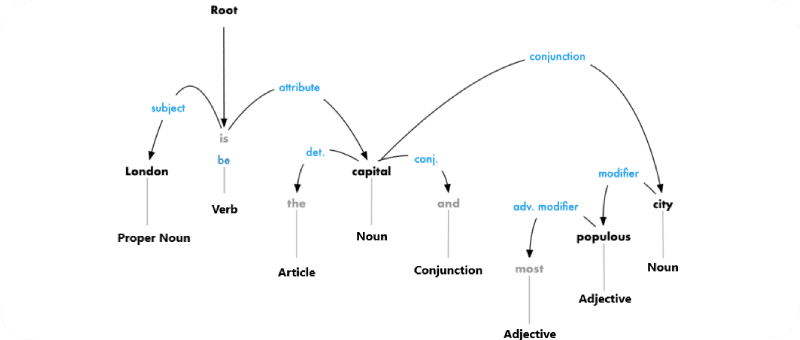

Step 6: Dependency Parsing

Here we identify how words relate to each other. The goal is to build a dependency tree where each token has a parent and a relationship type.

The tree helps reveal meaning—for instance:

“London” is the subject

“capital” is linked to “London” through the verb “be”

This tells us: London is the capital.

Following branches further can reveal relations like “capital of the United Kingdom.”
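One way to picture a dependency tree in code is a list of tokens where each token stores the index of its parent and the type of relationship. The parse below is written by hand for the first four words of our sentence; the field names and relation labels are assumptions for this sketch, and a real parser would predict them with a trained model.

```python
from typing import List, NamedTuple

class Token(NamedTuple):
    text: str
    head: int      # index of the parent token; here the root points to itself
    relation: str  # type of the link to the parent

# Hand-annotated parse of "London is the capital" (not parser output).
PARSE = [
    Token("London", 1, "subject"),
    Token("is", 1, "root"),
    Token("the", 3, "determiner"),
    Token("capital", 1, "attribute"),
]

def children(parse: List[Token], index: int, relation: str) -> List[str]:
    # Collect tokens whose parent is `index` and whose link has the given type.
    return [t.text for i, t in enumerate(parse)
            if i != index and t.head == index and t.relation == relation]

root = next(i for i, t in enumerate(PARSE) if t.relation == "root")
subject = children(PARSE, root, "subject")[0]
attribute = children(PARSE, root, "attribute")[0]
print(subject, "→", attribute)
```

Walking from the root to its subject and attribute is enough to recover the fact that London is the capital.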

Step 7: Named Entity Recognition (NER)

Now we identify which tokens refer to real-world entities. In the sentence:

London … England …

NER tags them as:

London → Geographical entity

England → Geographical entity

Modern systems use context, not just dictionaries. They can distinguish Brooklyn (the New York City borough) from Brooklyn Decker (the actress).

Common NER categories include:

Names of people

Organizations

Locations

Products

Dates and times

Monetary values

Events

This step enables structured data extraction from plain text.
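The difference between a dictionary lookup and the use of context can be shown with a deliberately crude sketch. The place list and the single context rule below are assumptions invented for this example; real NER systems learn such cues from annotated data instead of hand-written rules, and this rule would mislabel names like "London Bridge".

```python
from typing import List, Tuple

# Hypothetical mini-dictionary of place names.
LOCATIONS = {"London", "England", "Brooklyn"}

def tag_entities(tokens: List[str]) -> List[Tuple[str, str]]:
    entities = []
    i = 0
    while i < len(tokens):
        tok = tokens[i]
        nxt = tokens[i + 1] if i + 1 < len(tokens) else ""
        if tok in LOCATIONS and nxt[:1].isupper() and nxt not in LOCATIONS:
            # Context rule: a place name directly followed by another
            # capitalized word is treated as a person's full name.
            entities.append((tok + " " + nxt, "Person"))
            i += 2
        elif tok in LOCATIONS:
            entities.append((tok, "Geographical entity"))
            i += 1
        else:
            i += 1
    return entities

print(tag_entities(["London", "is", "the", "capital", "of", "England", "."]))
print(tag_entities(["Brooklyn", "Decker", "is", "an", "actress", "."]))
print(tag_entities(["She", "lives", "in", "Brooklyn", "."]))
```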

Step 8: Coreference Resolution

Even with all previous steps, pronouns remain a challenge. Consider:

It was founded by the Romans, who named it Londinium.

The word “it” refers to London, but because the steps so far process each sentence on its own, the pipeline needs an extra step to track such references.

Coreference resolution identifies when multiple words refer to the same entity.

Once resolved, the system understands that:

It → London

This greatly improves the completeness of the extracted information. Coreference resolution is one of the hardest NLP tasks. Deep learning models have improved accuracy significantly, but challenges remain.
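As a rough illustration only, the simplest possible heuristic replaces "it" with the most recently mentioned entity. This is a naive sketch, not how deep learning models resolve coreference: it assumes the set of candidate entities is already known from the NER step, and it would pick the wrong referent as soon as another candidate (for example "Romans") appeared between the pronoun and "London".

```python
from typing import List, Set

PRONOUNS = {"it"}

def resolve_pronouns(sentences: List[List[str]], entities: Set[str]) -> List[List[str]]:
    last_entity = None  # remembered across sentence boundaries
    resolved = []
    for tokens in sentences:
        out = []
        for tok in tokens:
            if tok in entities:
                last_entity = tok
                out.append(tok)
            elif tok.lower() in PRONOUNS and last_entity is not None:
                # Substitute the pronoun with the latest entity seen so far.
                out.append(last_entity)
            else:
                out.append(tok)
        resolved.append(out)
    return resolved

sentences = [
    ["London", "has", "been", "a", "major", "settlement", "."],
    ["It", "was", "founded", "by", "the", "Romans", ",", "who", "named", "it", "Londinium", "."],
]
resolved = resolve_pronouns(sentences, entities={"London"})
print(" ".join(resolved[1]))
```

The key point is that the remembered entity survives from one sentence to the next, which is exactly what the earlier per-sentence steps lack.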

Conclusion

These eight steps—sentence segmentation, tokenization, part-of-speech tagging, lemmatization, stop-word identification, dependency parsing, named entity recognition, and coreference resolution—transform raw text into a structured, analyzable form. This is the foundation of how NLP helps computers understand human language.